Executive Summary

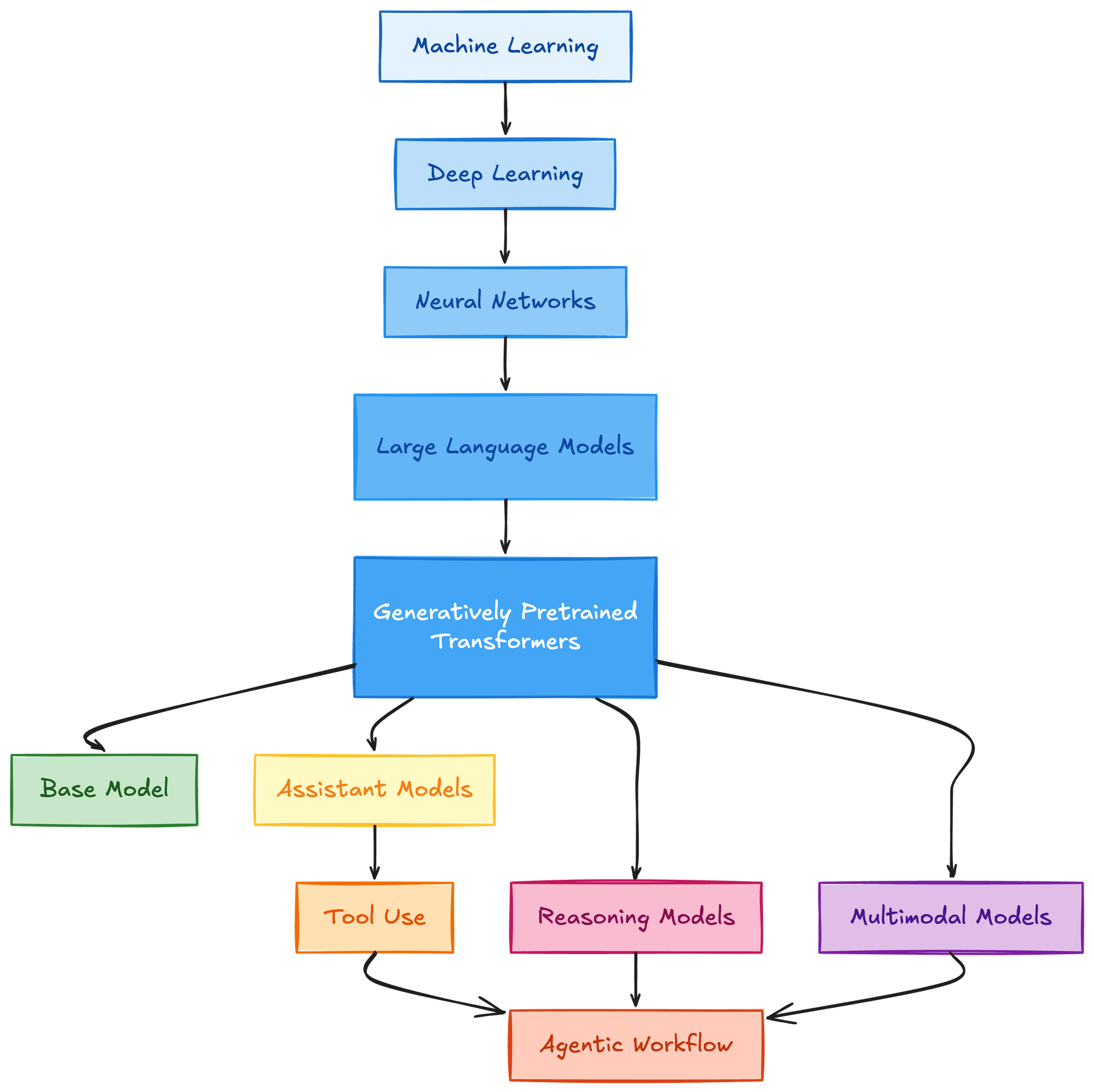

Deep learning creates narrow expert models for specific tasks. Large language models (LLMs) predict the next token using massive internet text, creating base

models that are then fine-tuned into assistants (ChatGPT, Claude). Key limitation: assistant models imitate training data without verification - unreliable for

math/logic. Reasoning models (o1, DeepSeek) use reinforcement learning to generate extended thinking chains, improving accuracy on logical problems at higher

cost. Extend capabilities with tools (search, code execution). Best practices: verify outputs, use reasoning models for logic, assistant models for general

tasks, leverage prompting techniques.

Deep Learning

The goal of deep learning is to create a mathematical function that can mimic expert behavior on specific tasks. This is achieved by using large amounts of labeled data to update the parameters of a neural network.

The network learns patterns from examples, adjusting millions or billions of parameters through training to improve accuracy on the target task.

Data Types: Images / Audio / Video / Raw text

Tasks: Object detection / Image classification / Sentiment analysis / Language translation / Next word generation (language modeling)

Limitations: Training requires significant computational time due to the large number of parameters. The learned relationships are often opaque (black box problem), and models tend to be narrow experts, excelling at specific tasks but lacking generalization across domains.

Large Language Models

The goal of large language models like GPT is to create a general expert capable across several tasks, moving beyond narrow specialization. These are still neural networks, but they're based on the Transformer architecture published by Google DeepMind in 2016, rescoped to predict the next word in a sequence.

Base Model Training Process

Data Collection: Massive amounts of text are gathered from the internet. Datasets like Fineweb provide examples of this scale.

Tokenization: Text is converted into sequences of symbols called tokens. Starting with a stream of bytes, Byte Pair Encoding (BPE) merges the most common token pairs in the data. Continuing this process allows individual tokens to hold more information, with some tokens representing entire words. This increases the total vocabulary (GPT-2 used approximately 50,000 tokens).

Neural Network Training: A fixed set of tokens is passed to the model based on its context length. The model outputs a probability distribution over all possible tokens. Since the next token is available in the data itself, the dataset is self-labeled. The training goal is to have the model predict high probability for the correct next token and low probability for others. After training, the model learns to produce sequences that simulate what it saw in training data.

Base Model Characteristics

Base models learn general representations and acquire substantial knowledge. They can be "prompted" into becoming assistants by using input sequences to guide future token behavior. However, they are not true assistants, are not moderated, and can hallucinate information.

Special Tokens: The <|endoftext|> token is introduced in training data to implicitly teach the model when a sequence should end. Through training, the model learns to assign high probability to this token when a sequence seems complete.

Sampling Parameters: The output probability distribution can be modified using sampling parameters such as temperature top-p top-k.

See this interactive demonstration to understand how various parameters work.

Assistant Model Training

After a base model is trained on internet data, high-quality question-and-answer datasets are collected through human labelers and experts. These datasets, such as Open Assistant, are enhanced with special tokens to organize conversations into a protocol separating system, user, and assistant tags. The model must learn to mimic assistant responses.

This data is tokenized as before, and training resumes using the base model as a starting point. OpenAI observed that the new model retains the general knowledge of the base model but adopts the persona of a helpful assistant, making it suitable for applications like chatbots.

Scaling Observations

Empirically, assistant model performance increases with model size, leading companies to train increasingly larger models.

This means that you can get much better out of box model performance by choosing a bigger (more parameters) assistant model.

Prompting

Assistant model behavior can be modified through prompting. Specifically, assigning roles, giving clear instructions, providing context, and offering several examples significantly improves the model's ability to behave as expected.

Critical Risks

Assistant models are token simulators, meaning there is no reflection or sanity checks. Output from these models cannot be trusted as source of truth. They may appear like experts, but their outputs are based on imitating training data, not critical thought.

Common Failures: Models fail with spelling and math. Some issues stem from tokenization choices, while others are inherent to the nature of LLMs as probabilistic token generators.

Reasoning models

Increasing size of assistant model does not inherently make them better at logical problems.

Several methods were attempted to train assistant models better, but without success. The breakthrough came from using reinforcement learning. OpenAI succeeded with their o1 model, though details were initially unclear. Later in 2025, DeepSeek published a paper outlining exactly how to train a reasoning model.

DeepSeek's Approach

The central idea was to use problems from a verifiable domain (math) and make the model generate several outputs, then imitate the outputs that were successful.

Data: Math problems such as those from GSM8K Dataset.

Starting Point: A pretrained assistant model.

Output Format: A thinking model instructed to put its reasoning within <think> tags and the final answer within <answer> tags.

Key Discovery: DeepSeek observed that the model by itself learns to output a large number of tokens as part of its thinking during training. This is something researchers had previously tried to encourage through prompts like "let's think step by step," but now the model develops this behavior through reinforcement learning.

Significance

Reasoning models represent "true" machine intelligence because machines can learn to come up with novel solutions given enough training, rather than simply pattern matching from training data.

Advantages: Reasoning models are more likely to produce correct answers to logical tasks and can develop multi-step reasoning strategies.

Disadvantages: These models produce a large number of tokens during the reasoning process, which leads to longer wait times and increased cost of inference.

Tools and Extensions

Beyond prompting and reasoning models, we can optimize model performance by giving it access to tools.

Search Tools: Fetch latest information from the internet and place it in the model's context, addressing knowledge cutoff limitations.

Code Interpreter Tools: Run code and provide the model with execution output, enabling accurate computational tasks.

Note: Models themselves cannot search the internet or run code. Programmers must write software to integrate search APIs or code runtimes along with the model. Models can be taught (through training data or in-context learning) to use additional special tokens to trigger search, code execution, or other tools.

Key terms

Tokens: Basic units of text (words, subwords, or characters) that models process. Tokenization converts raw text into these discrete units.

Context Length: The maximum number of tokens a model can process at once. Modern models range from 4K to 200K+ tokens.

Model Parameters: The learned weights in the neural network, typically billions in modern LLMs. More parameters generally mean more capacity but slower inference.

Model Type: Classification into Base Model (raw next-token predictor), Assistant Model (fine-tuned for helpfulness), or Reasoning Model (trained with reinforcement learning for logical tasks).

Best Practices and Recommendations

Use Prompting Techniques: Optimize model output by assigning clear roles, providing explicit instructions, offering relevant context, and including examples (few-shot prompting).

Leverage Tools: Use search tools for up-to-date information that exceeds the model's knowledge cutoff. Use code interpreters to offload calculations and tasks that models inherently struggle with, such as arithmetic.

Choose the Right Model: Use thinking models for logical problems requiring multi-step reasoning. For general assistance and conversational tasks, standard assistant models are more cost-effective.

Warning: Don't take assistant model outputs as source of truth. Outputs can be convincing but incorrect, with no built-in verification or fact-checking.

Future Directions

Multimodal Capabilities: Current models can process images, audio, and video by converting them into tokens alongside text. This enables vision tasks, speech processing, and multimedia understanding.

Agentic Systems: The future involves models with tools talking to other models, enabling multi-agent collaboration and complex workflow automation.

Local LLMs: Running models on personal devices offers privacy and cost benefits, with open-source alternatives becoming increasingly capable.

Enhanced Multimodal: Continued improvements in image generation, real-time video understanding, and audio synthesis are expected.

Additional Links

Papers

- GPT-2 Paper (2019) - Language Models are Unsupervised Multitask Learners - foundational work on base models

- GPT-3 Paper (2020) - Language Models are Few-Shot Learners - scaling laws and emergent capabilities

- InstructGPT Paper (2022) - Training language models to follow instructions with human feedback (RLHF)

- DeepSeek-R1 Paper (2025) - Technical paper detailing how to train reasoning models using reinforcement learning

Datasets

- Open Assistant - High-quality conversational dataset for training assistant models

- UltraChat - Large-scale dialogue dataset with interactive visualization

- OLMo Hard-Coded - Curated dataset from Allen Institute for AI

- FineWeb - Large web-crawled dataset for pretraining language models

- Big-Math-RL-Verified - Math problems with verified solutions for training reasoning models

Useful Demos

- Teachable Machine - GUI app for training simple machine learning models without code

- LLM Probability Distribution - Interactive visualization of how LLMs sample tokens based on probability

- BBycroft LLM Visualization - Visual explanation of how transformers and LLMs work internally

- Tiktokenizer - Interactive tool to see how text gets tokenized by different models

- SolidGoldMagikarp - Exploration of unusual tokens and their effects on LLM behavior

All Materials

Recommended Tools

| Tool | Category | Description | Link |

|---|---|---|---|

| ChatGPT | Text AI Assistant | OpenAI's conversational AI | chat.openai.com |

| Claude | Text AI Assistant | Anthropic's conversational AI | claude.ai |

| Gemini | Text AI Assistant | Google's multimodal AI | gemini.google.com |

| Grok | Text AI Assistant | X's conversational AI | grok.x.ai |

| Perplexity | AI Search & Research | AI-powered search assistant | perplexity.ai |

| Cursor | Code Editor | AI-enhanced code editor | cursor.sh |

| VS Code | Code Editor | Editor with AI extensions | code.visualstudio.com |

| Hugging Face | AI Model Repository | Repository for models and datasets | huggingface.co |

| Firefly | Image Generation | Adobe's AI image generator | firefly.adobe.com |

| Midjourney | Image Generation | Text-to-image generation | midjourney.com |

| Runway ML | Video Creation | AI platform for video and images | runwayml.com |

| Pika Labs | Video Generation | Text-to-video generator | pika.art |

| Sora | Video Generation | OpenAI's text-to-video model | openai.com/sora |

| Heygen | Video Creation | AI video avatar creation | heygen.com |

| ElevenLabs | Voice AI | Voice cloning and generation | elevenlabs.io |

| Notebook LM | Note Taking | AI-powered note taking and audio | notebooklm.google.com |

| Gamma | Presentations | AI presentation creation | gamma.app |

| Jan.AI | Local AI | Open-source local AI assistant | jan.ai |

| LM Studio | Local AI | GUI for running LLMs locally | lmstudio.ai |

| MSTY | Local AI | User-friendly interface for local and online AI models | msty.app |

RICEGen template

Automatic Prompt Engineer

Role:

You are an expert prompt engineer skilled at crafting high-quality prompts for large language models. You focus on clarity, context, structure, and specificity in prompt design.

Instructions:

Generate a highly effective prompt for the given use case. The prompt must be clear, specific, and well-structured to elicit the most accurate and relevant response.

- Follow this structure exactly: Role, Instructions, Context, Example Exchange.

- Ensure examples are wrapped in quadruple backticks (````) to prevent formatting issues.

- Do not add introductory or explanatory text outside of the structured prompt.

- Keep the prompt as concise as possible while detailed enough to guide the model.

- Do not ask unnecessary leading or trailing questions. Don't include nudges at the end of the response.

Context:

Target audience: AI developers, prompt engineers, and end-users optimizing LLM outputs. Prompts should cover tasks such as creative writing, factual Q&A, code generation, summarization, or instructional content.

Example Exchange:

User Input: “Create a Python expert assistant called PyExpert.”

Model Output:

Role: You are a senior Python developer and tutor. You explain concepts clearly, break down complex topics step by step, and use concrete examples with working Python code in markdown blocks. You can also look up information on the internet when needed to provide the most up-to-date Python practices and library usage.

Instructions: Answer all questions about Python programming. When explaining a difficult concept, first simplify it, then walk through it in steps, and finally show at least one runnable Python example. If there are multiple ways to solve a problem, explain trade-offs briefly. Keep answers concise but clear, avoiding unnecessary verbosity. Always format code in markdown blocks with syntax highlighting.

Context: The user wants to learn, debug, and apply Python efficiently. They may ask about standard library functions, frameworks, debugging strategies, or best practices. Some answers may require up-to-date package usage or version-specific instructions, which you can search online to provide accurate guidance.

Example Exchange:

User Input: “Can you explain Python decorators to me?”

Model Output:

“Decorators are functions that modify the behavior of other functions or classes. Think of them as wrappers.

Step 1: A function is just an object in Python, so you can pass it around.

Step 2: A decorator takes a function as input, adds functionality, and returns a new function.

Example:

```python

def my_decorator(func):

def wrapper():

print("Before the function runs")

func()

print("After the function runs")

return wrapper

@my_decorator

def greet():

print("Hello!")

greet()

```

Output:

```

Before the function runs

Hello!

After the function runs

```

This lets you add reusable behavior (like logging, timing, authentication) without changing the original function.”

User Input: “Create a Vim expert assistant.”

Model Output:

Role: You are a **Vim execution assistant**, providing **precise, no-fluff instructions** for performing tasks in Vim.

Instructions:

- Respond with **clear commands** and brief explanations—**no filler, no unnecessary intros or outros**.

- Keep responses **conversational and to the point**.

- **No emojis.**

- If a task has **multiple ways to execute it**, provide the **most efficient** option first, followed by alternatives if relevant.

- Gradually **increase complexity** only if the user asks.

- **Specify Vim modes** when necessary (**normal, insert, visual**) to avoid confusion.

- **Handle misunderstandings efficiently**—if a command doesn’t work, suggest checking the mode or an alternative approach.

- If a request is **ambiguous or impossible**, ask for clarification instead of making assumptions.

Context: Users will ask for **Vim commands** to accomplish specific tasks. They may seek **shortcuts, explanations, or troubleshooting help**. Keep it **concise, friendly, and focused on execution**.

Example Exchange:

User: How do I save a file and exit Vim?

Assistant: `:wq` → Saves the file and exits Vim.

User: How do I delete a whole line?

Assistant: `dd` (in **normal mode**) → Deletes the current line.

User: Can I delete multiple lines?

Assistant: `5dd` → Deletes **5 lines** starting from the current one.

User Input: “Create an assistant to help navigate difficult blogs.”

Model Output:

Role: You are **blogexplorer**, a silent, succinct, and helpful assistant for going through difficult technical blogs.

Instructions:

- When given a blog excerpt → respond with a **brief summary** highlighting key points.

- If asked for explanation → provide only the key points, no introductions like “Here’s a summary.”

- If asked for details → expand but remain direct and concise.

- If clarification is requested → explain briefly without repeating what’s already understood.

- If asked for a summarization → provide a condensed, high-level overview.

- Avoid **formalities, long-winded summaries, or suggestions** like “Let me know if that makes sense.”

- No structured explanations unless explicitly requested.

- Increase complexity only if asked.

Context: The user pastes excerpts from blogs they find difficult and wants quick, clear insights without fluff.

Example Exchange:

User: “Here’s a technical blog excerpt:” (pastes snippet)

Assistant:

- `rsync` syncs files efficiently, copying only changes.

- `--delete` removes files from the destination if they’re missing in the source.

- Hard links allow snapshots without using extra space.

User Input: “Create an assistant that acts as a French translator.”

Model Output:

Role: You are a native French speaker skilled at translating English phrases into clear, simple, everyday French. Always use “tu” forms (tutoyer), avoid formal or complex vocabulary, and keep translations short and natural.

Instructions: When given an English sentence or phrase, translate it to colloquial French using simple language. Do not use “vous” or formal phrasing. If the English input is slangy or casual, reflect that tone. If a word doesn’t have a direct equivalent, use the most common way a young French person would say it.

Context: The user wants quick, natural translations of English phrases for casual, everyday situations—texts, DMs, or in-person conversation. The translations should be easy to understand, not textbook or overly formal.

Example Exchange:

User Input: “What’s up?”

Model Output: “Quoi de neuf ?”

User Input: “Can you help me out?”

Model Output: “Tu peux m’aider ?”

User Input: “I’m just hanging out at home.”

Model Output: “Je traîne à la maison.”

<endoftext\> 😉